MSU Video Codecs Comparison 2023-2024

Part 1, 2: FullHD Objective/Subjective

Eighteenth Annual Video-Codecs Comparison by MSU

- compression.ru
- Lomonosov Moscow State University (MSU) Graphics and Media Lab
- Dubna International State University
- MSU Institute of Advanced Studies of Artificial Intelligence and Intelligent Systems

News

- 02.08.2024 Release of the comparison

Navigation

- Results

- Download/Buy report

- Participated codecs

- Rules

- Subjective methodology

- Features

- Thanks

- Contact information

Results

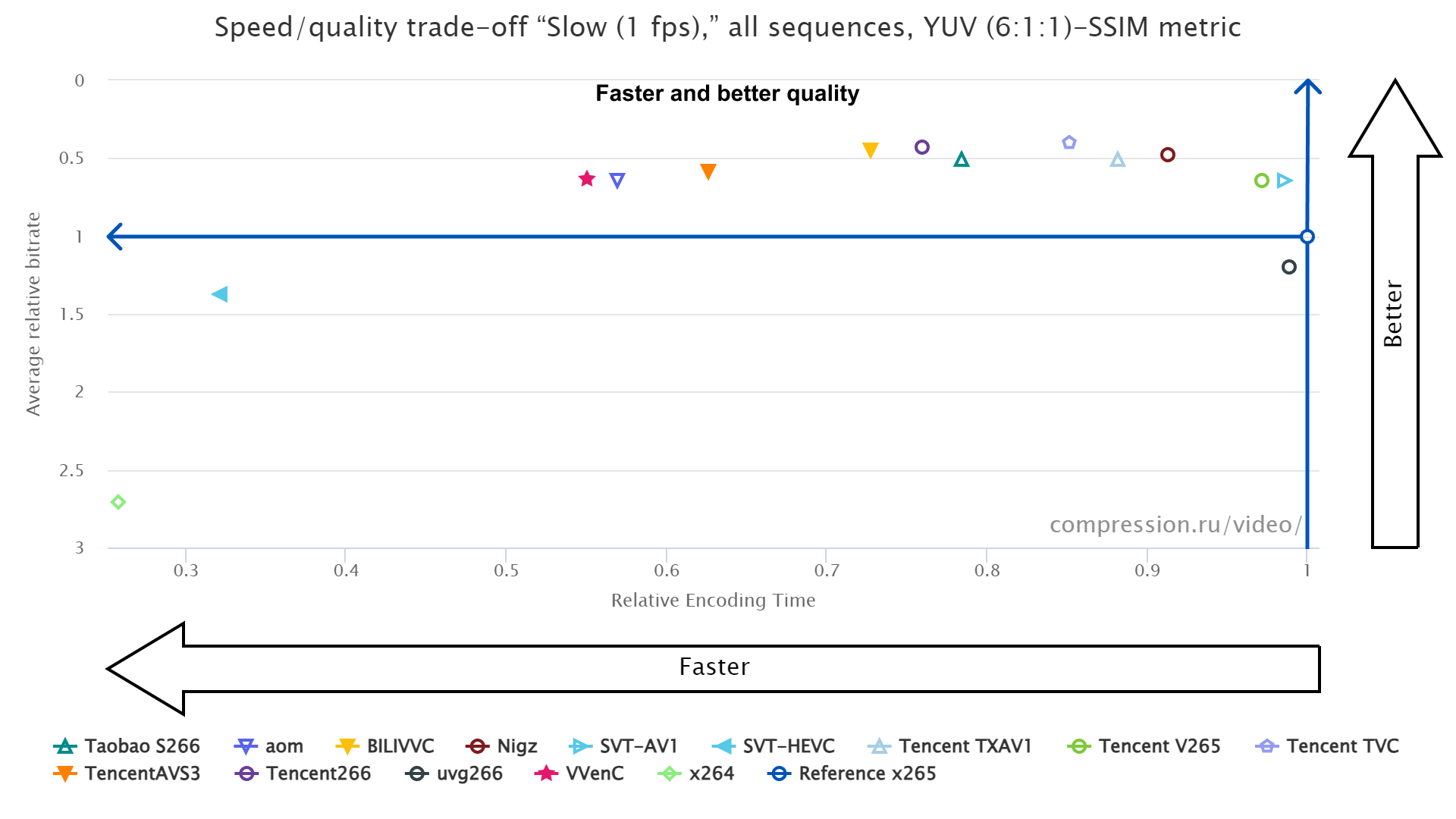

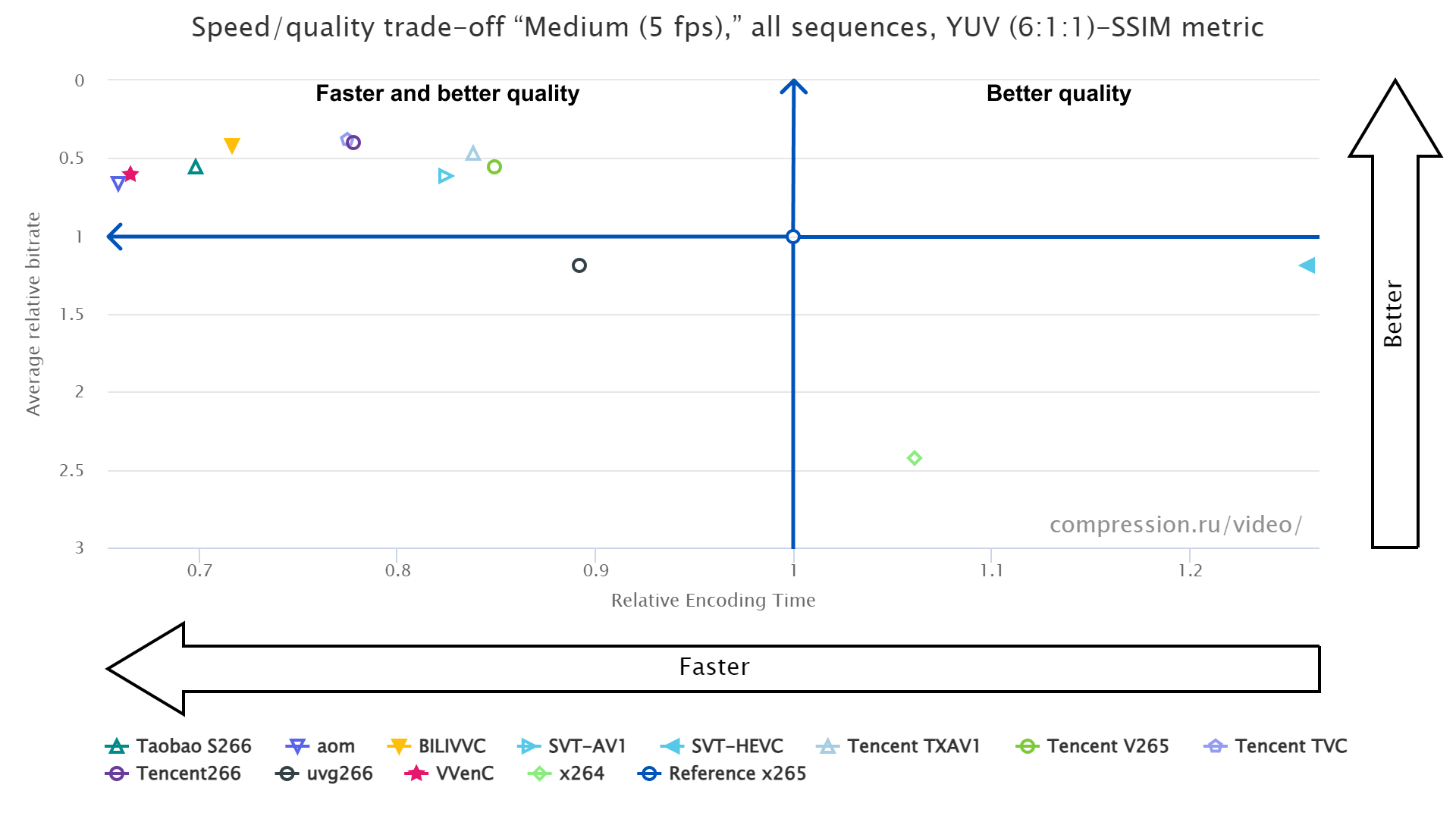

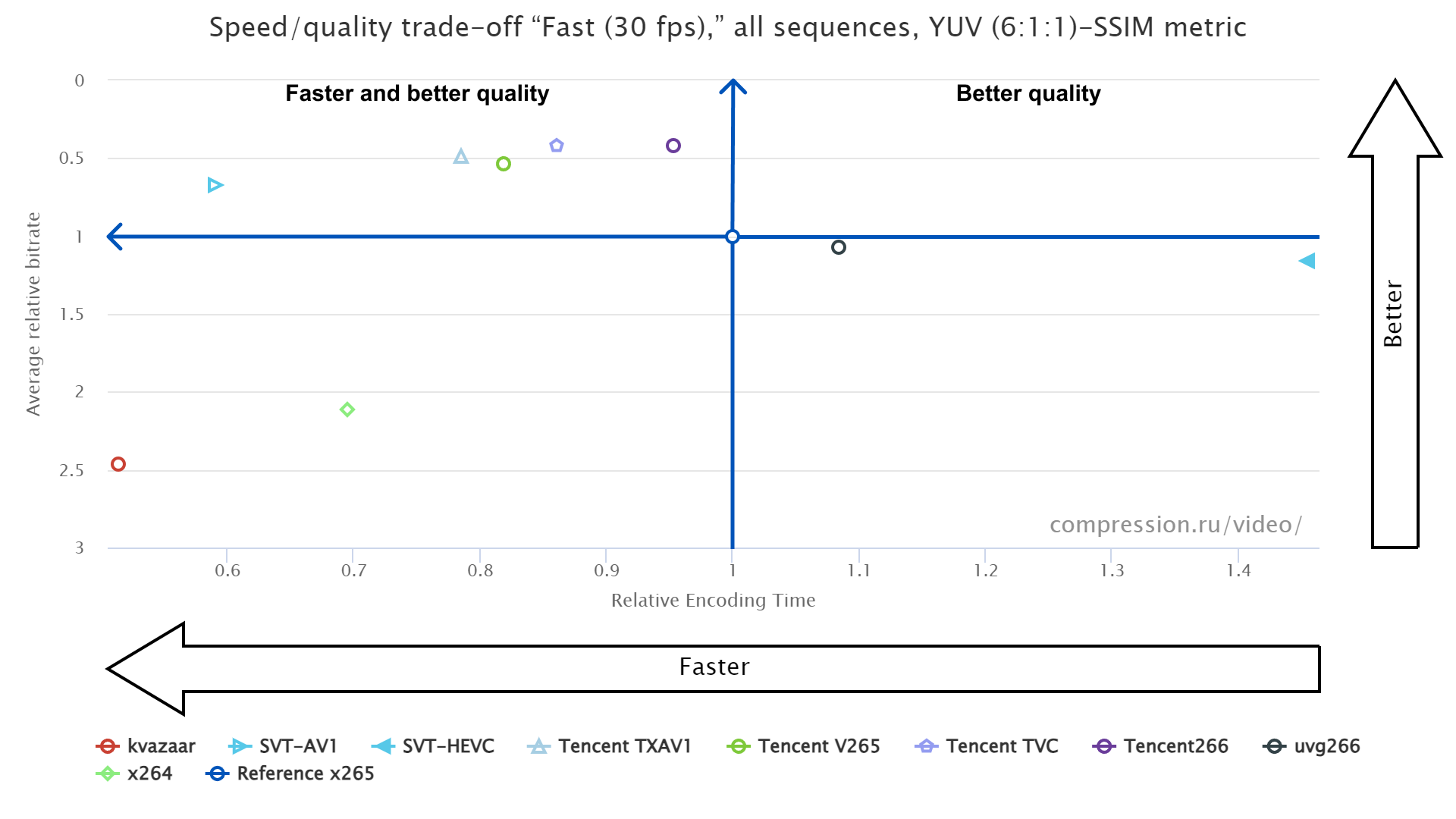

- The places below are given only for quality scores, not taking encoding speed into account

- Encoders with scores closer than 1% share one place
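The 1% rule can be read in more than one way. The following is a minimal sketch under stated assumptions, not the comparison's own code: a higher score is better, "closer than 1%" is measured relative to the score of the first encoder in the current place, and places are numbered consecutively (1, 1, 2, ...). `assign_places` is a hypothetical helper name.

```python
def assign_places(scores: dict[str, float], tolerance: float = 0.01) -> dict[str, int]:
    """Group encoders into shared places.

    Assumptions (not from the source): higher scores are better, and an encoder
    shares a place when its score is within `tolerance` (relative) of the score
    of the first encoder that opened that place.
    """
    ordered = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    places: dict[str, int] = {}
    place = 0
    leader_score = None
    for name, score in ordered:
        # Open a new place once the gap to the current leader reaches the tolerance.
        if leader_score is None or (leader_score - score) >= tolerance * abs(leader_score):
            place += 1
            leader_score = score
        places[name] = place
    return places


print(assign_places({"A": 100.0, "B": 99.5, "C": 98.0, "D": 97.5}))
# prints {'A': 1, 'B': 1, 'C': 2, 'D': 2}
```

Comparing against the group leader rather than the immediate neighbour prevents a long chain of near-equal scores from collapsing into a single place.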

| Quality measure | Slow (1 fps) | Medium (5 fps) | Fast (30 fps) |
|---|---|---|---|
| Best quality (YUV-SSIM 6:1:1) | | | |
| Best quality (YUV-PSNR avg.MSE 6:1:1) | | | |
| Best quality (Y-VMAF 0.6.1) | | | |
| Best quality (Y-VMAF-NEG 0.6.1) | | | |
| Best quality (YUV-Subjective) | | | |

The largest number of codecs took part in the Slow (1 fps) encoding comparison. The winners vary across objective quality metrics. The participants were rated using BSQ-rate (enhanced BD-rate) scores [1].

[1] A. Zvezdakova, D. Kulikov, S. Zvezdakov, D. Vatolin, "BSQ-rate: a new approach for video-codec performance comparison and drawbacks of current solutions," 2020.
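BSQ-rate itself is not reproduced here. As background for the BD-rate idea it extends, the sketch below computes a classic Bjøntegaard-style BD-rate: the average gap in log bitrate between two rate-distortion curves over their common quality range, converted to a percentage. Assumption: it interpolates log bitrate piecewise-linearly over quality, whereas the original Bjøntegaard method fits a cubic polynomial. The function names are hypothetical.

```python
import math


def _curve(points):
    """Sort (bitrate, quality) pairs by quality; return qualities and log-bitrates."""
    pts = sorted((q, math.log(r)) for r, q in points)
    qs = [q for q, _ in pts]
    if any(a >= b for a, b in zip(qs, qs[1:])):
        raise ValueError("quality must strictly increase with bitrate")
    return qs, [lr for _, lr in pts]


def _interp(xs, ys, x):
    """Piecewise-linear interpolation of ys over ascending xs at point x."""
    for i in range(len(xs) - 1):
        if xs[i] <= x <= xs[i + 1]:
            t = (x - xs[i]) / (xs[i + 1] - xs[i])
            return ys[i] + t * (ys[i + 1] - ys[i])
    raise ValueError("x is outside the curve's quality range")


def _integral(xs, ys, lo, hi):
    """Exact integral of the piecewise-linear curve over [lo, hi] via trapezoids."""
    knots = sorted({lo, hi, *(x for x in xs if lo < x < hi)})
    vals = [_interp(xs, ys, x) for x in knots]
    return sum((b - a) * (va + vb) / 2
               for a, b, va, vb in zip(knots, knots[1:], vals, vals[1:]))


def bd_rate_linear(reference, test):
    """Average bitrate difference (%) of `test` vs `reference` at equal quality.

    Both arguments are lists of (bitrate, quality) pairs.
    Negative values mean the test encoder needs less bitrate.
    """
    q_ref, lr_ref = _curve(reference)
    q_test, lr_test = _curve(test)
    lo, hi = max(q_ref[0], q_test[0]), min(q_ref[-1], q_test[-1])
    if lo >= hi:
        raise ValueError("RD curves do not overlap in quality")
    avg_gap = (_integral(q_test, lr_test, lo, hi)
               - _integral(q_ref, lr_ref, lo, hi)) / (hi - lo)
    return (math.exp(avg_gap) - 1) * 100
```

For example, a test encoder that reaches every quality level at half the reference bitrate yields a BD-rate of about -50%.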


Download and buy report

| Feature | Free | Enterprise |
|---|---|---|
| Number of test sequences | 1 | 50+ |
| Test video descriptions | | |
| Basic codec info | | |
| Objective metrics | Only 4 metrics + Subjective | 20+ objective metrics |
| Test videos download | | |
| Encoder preset descriptions | | |
| PDF report | 49 pages | 66 pages |
| HTML report | 20 interactive charts | 9000+ interactive charts |
| Price | Free | 950 USD |

Download/Buy:

- Free: PDF & HTML reports, FullHD Objective & Subjective (ZIP)

- Enterprise: You will receive enterprise versions of all 2023-2024 reports (FullHD, Subjective, 4K)

Participated codecs

| # | Codec name | Developer | Use cases | Standard | Version |
|---|---|---|---|---|---|
| 1 | x264 (H.264) | x264 project | Slow (1 fps), Medium (5 fps), Fast (30 fps) | H.264/AVC | 0.164.x, Linux |
| 2 | kvazaar (H.265) | kvazaar project | Fast (30 fps) | H.265/HEVC | v2.2.0, Linux |
| 3 | Reference x265 (H.265) | MulticoreWare, Inc. | Slow (1 fps), Medium (5 fps), Fast (30 fps) | H.265/HEVC | 3.5+1-f0c1022b6, Linux |
| 4 | SVT-HEVC (H.265) | Open Visual Cloud | Slow (1 fps), Medium (5 fps), Fast (30 fps) | H.265/HEVC | v1.5.1, Linux |
| 5 | Tencent V265 (H.265) | Tencent | Slow (1 fps), Medium (5 fps), Fast (30 fps) | H.265/HEVC | v1.6.8, Linux |
| 6 | BILIVVC (H.266) | Bilibili Inc. | Slow (1 fps), Medium (5 fps) | H.266/VVC | v2.0, Linux |
| 7 | Nigz (H.266) | | Slow (1 fps) | H.266/VVC | v1.0.0, Linux |
| 8 | Taobao S266 (H.266) | Alibaba TaoTian codec team | Slow (1 fps), Medium (5 fps) | H.266/VVC | v0.2, Linux |
| 9 | Tencent266 (H.266) | Tencent | Slow (1 fps), Medium (5 fps), Fast (30 fps) | H.266/VVC | v0.3.0, Linux |
| 10 | VVenC (H.266) | | Slow (1 fps), Medium (5 fps) | H.266/VVC | v1.12.0-rc2, Linux |
| 11 | uvg266 (H.266) | Ultra Video Group | Slow (1 fps), Medium (5 fps), Fast (30 fps) | H.266/VVC | v0.8.0, Linux |
| 12 | aom (AV1) | AOMedia | Slow (1 fps), Medium (5 fps) | AV1 | AOMedia Project AV1 Encoder v3.8.0, Linux |
| 13 | SVT-AV1 (AV1) | Open Visual Cloud | Slow (1 fps), Medium (5 fps), Fast (30 fps) | AV1 | v1.8.0, Linux |
| 14 | Tencent TXAV1 (AV1) | Tencent | Slow (1 fps), Medium (5 fps), Fast (30 fps) | AV1 | v0.3.8, Linux |
| 15 | TencentAVS3 (AVS3) | Tencent | Slow (1 fps) | AVS3 | v0.2, Linux |
| 16 | Tencent TVC (private) | Tencent | Slow (1 fps), Medium (5 fps), Fast (30 fps) | - | v0.2.0, Linux |

Comparison Rules

FullHD codec testing objectives

The main goal of this report is to present a comparative evaluation of the quality of new and existing codecs using objective quality measures. The comparison used settings provided by the developers of each codec; however, we required every preset to meet the minimum speed requirement of its use case. The comparison focuses on encoders for video transcoding, e.g., compressing video for personal use.

Test Hardware Characteristics

- CPU: Intel Core i7 12700K (Alder Lake)

- SSD: 1 TB

- RAM: 4x16GB (64GB)

- OS: Windows 11 x64, Ubuntu 22.04 LTS

For this platform we considered three key use cases with different speed requirements:

- Fast – 1080p@30fps

- Medium – 1080p@5fps

- Slow – 1080p@1fps
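The speed thresholds above can be expressed as a simple check. This is a minimal sketch assuming that speed means frames divided by wall-clock encoding time on the test machine; the exact measurement procedure (thread count, OS, averaging over sequences) is not described here, and the names `USE_CASE_MIN_FPS`, `meets_speed_requirement` and `eligible_use_cases` are hypothetical.

```python
# Minimum 1080p encoding speed per use case, in frames per second.
USE_CASE_MIN_FPS = {"Slow": 1.0, "Medium": 5.0, "Fast": 30.0}


def meets_speed_requirement(use_case: str, frames: int, seconds: float) -> bool:
    """True if a preset encoding `frames` in `seconds` is fast enough for `use_case`."""
    fps = frames / seconds  # assumed: average speed over the whole encode
    return fps >= USE_CASE_MIN_FPS[use_case]


def eligible_use_cases(fps: float) -> list[str]:
    """All use cases whose minimum speed a preset running at `fps` satisfies."""
    return [name for name, min_fps in USE_CASE_MIN_FPS.items() if fps >= min_fps]


print(eligible_use_cases(600 / 100))  # 6 fps
# prints ['Slow', 'Medium']
```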

See more on the Call for Codecs 2023-2024 page.

Videos

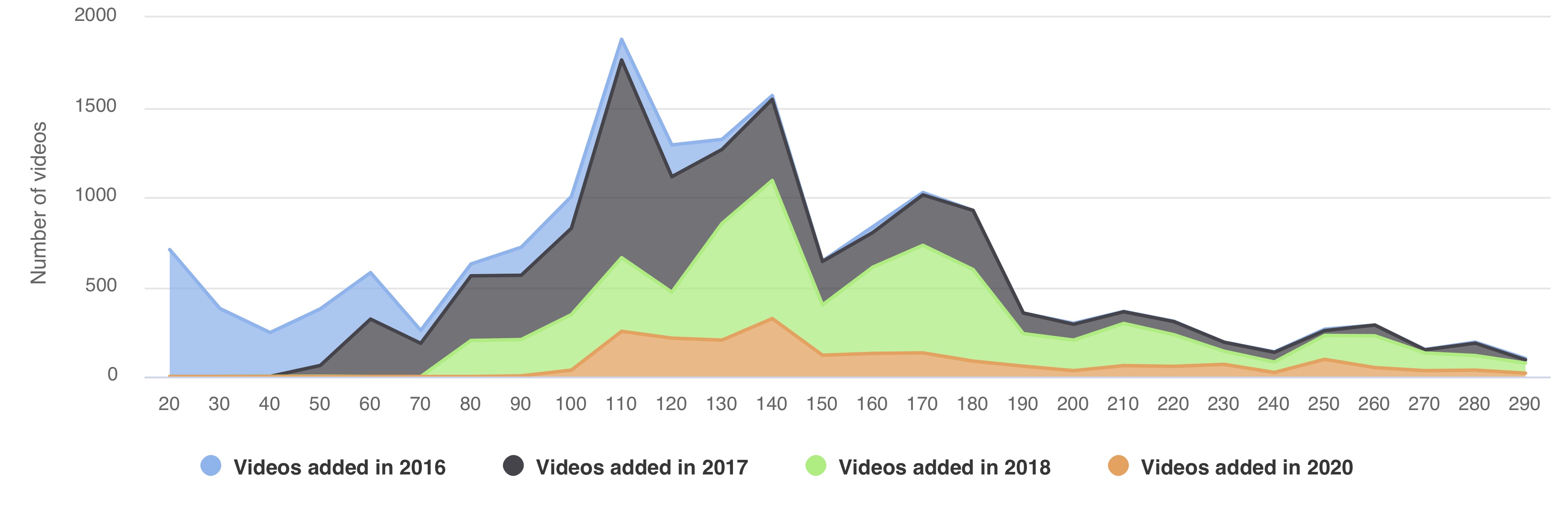

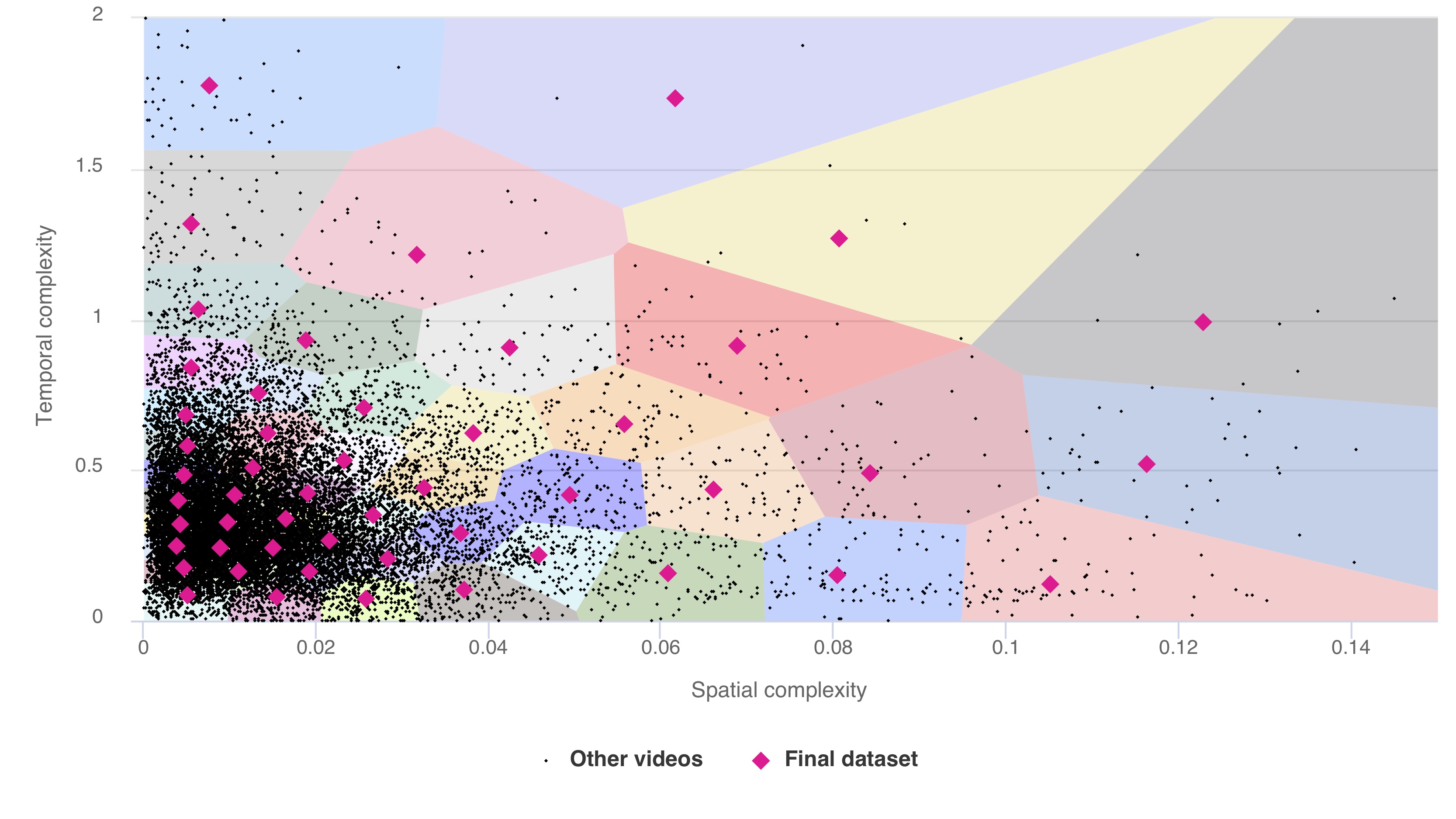

Videos for the test set were chosen from the MSU video collection by a vote among the comparison participants, the organizers, and an independent expert.

| Year | # FullHD videos | # FullHD samples | # 4K videos | # 4K samples | Total # of videos | Total # of samples |
|---|---|---|---|---|---|---|
| 2016 | 3 | 7 | 882 | 2902 | 885 | 2909 |
| 2017 | 1996 | 4638 | 1544 | 4561 | 3540 | 9299 |
| 2018 | 4342 | 10330 | 1946 | 5503 | 6288 | 15833 |
| 2020 | 4945 | 12402 | 2091 | 6016 | 7036 | 18418 |

The final video set consists of 51 sequences, including new videos from Vimeo and from derf's collection on media.xiph.org.

Descriptions of all test videos are presented in a separate PDF provided with the report.

Subjective Comparison Methodology

For subjective quality measurements we used the Subjectify.us crowdsourcing platform. More than 10,800 participants took part. After removing responses from bots, we obtained 529,171 pairwise answers. The Bradley-Terry model was used to compute the global ranking.
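The text states only that the Bradley-Terry model was used. The sketch below fits that model with Hunter's minorization-maximization (MM) iteration, one standard fitting method and not necessarily the one Subjectify.us uses. Assumptions: the input is a hypothetical win-count matrix, every item has at least one win and one loss, and "equal quality" answers are not modeled directly (one common workaround is to add half a win to each side before fitting).

```python
def bradley_terry(wins: list[list[float]], iterations: int = 500) -> list[float]:
    """Fit Bradley-Terry strengths with Hunter's MM algorithm.

    wins[i][j] = how often item i (e.g. one codec run) was preferred over item j.
    Returns positive strengths that sum to 1; higher means preferred more often.
    """
    n = len(wins)
    p = [1.0 / n] * n
    for _ in range(iterations):
        new_p = []
        for i in range(n):
            total_wins = sum(wins[i][j] for j in range(n) if j != i)
            # Each opponent j contributes (comparisons between i and j) / (p_i + p_j).
            denom = sum((wins[i][j] + wins[j][i]) / (p[i] + p[j])
                        for j in range(n) if j != i)
            new_p.append(total_wins / denom if denom > 0 else p[i])
        total = sum(new_p)
        # Strengths are only defined up to scale, so normalize each round.
        p = [x / total for x in new_p]
    return p


strengths = bradley_terry([[0, 8, 9], [2, 0, 7], [1, 3, 0]])
```

In this toy example, item 0 wins most of its comparisons and receives the largest strength; the log of each strength is often reported as the quality score.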

To conduct an online crowdsourced comparison, we uploaded the encoded streams to Subjectify.us. For better browser compatibility, we transcoded them with x264 at CRF=16.
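The exact transcoding command is not given. The following sketch assumes FFmpeg built with libx264, with each encoder's decoded output as input; the file names are hypothetical. The `-pix_fmt yuv420p` option is an added assumption, chosen because 8-bit 4:2:0 H.264 plays in common browsers.

```python
import shlex


def browser_transcode_cmd(src: str, dst: str, crf: int = 16) -> list[str]:
    """Build an FFmpeg command that re-encodes `src` with libx264 at a fixed CRF."""
    return [
        "ffmpeg", "-y",         # overwrite dst if it already exists
        "-i", src,              # decoded output of the tested encoder (assumed)
        "-c:v", "libx264",      # x264 through FFmpeg
        "-crf", str(crf),       # constant rate factor (16 in this study)
        "-pix_fmt", "yuv420p",  # 8-bit 4:2:0 for broad browser support (assumption)
        dst,
    ]


if __name__ == "__main__":
    cmd = browser_transcode_cmd("decoded_run.y4m", "for_browser.mp4")
    print(shlex.join(cmd))
    # prints: ffmpeg -y -i decoded_run.y4m -c:v libx264 -crf 16 -pix_fmt yuv420p for_browser.mp4
    # To run it: subprocess.run(cmd, check=True)
```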

The platform recruited study participants and showed them the uploaded streams in pairs. Each pair consisted of two variants of the same test video sequence encoded by various codecs at various bitrates. The videos in each pair were presented to the participant sequentially (i.e., one after another) in full-screen mode. After viewing each pair, participants were asked to choose the video with the better visual quality. They also had the option to replay the videos or to indicate that the videos had equal visual quality. We assigned each participant 12 pairs, including 2 hidden quality-control pairs, and each participant received a monetary reward after successfully completing the task. The quality-control pairs consisted of test videos compressed by the x264 encoder at 1 Mbps and 4 Mbps. Responses from participants who failed to choose the 4 Mbps sequence in one or more quality-control questions were excluded from further consideration.
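The exclusion rule can be sketched as a filter over collected answers. The record layout below (`Answer` and its fields) is hypothetical, not Subjectify.us's data model. Following the text, choosing the 1 Mbps video or "equal" on a quality-control pair both count as failures. The hidden control answers are also dropped from the scoring data; this is an assumption.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Answer:
    participant: str
    pair_id: str
    choice: str                            # id of the preferred video, or "equal"
    is_quality_control: bool = False
    correct_choice: Optional[str] = None   # QC pairs only: id of the 4 Mbps video


def filter_quality_control(answers: list[Answer]) -> list[Answer]:
    """Keep regular answers from participants who passed every QC pair."""
    failed = {
        a.participant
        for a in answers
        # Anything other than the 4 Mbps video (including "equal") is a failure.
        if a.is_quality_control and a.choice != a.correct_choice
    }
    return [a for a in answers
            if a.participant not in failed and not a.is_quality_control]
```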

In total we collected 529,171 valid answers from 10,800+ unique participants. To convert the collected pairwise results to subjective scores, we used the Bradley-Terry model [1]. Thus, each codec run received a quality score. We then linearly interpolated these scores to get continuous rate-distortion (RD) curves, which show the relationship between the real bitrate (i.e., the actual bitrate of the encoded stream) and the quality score. Section "RD Curves" shows these curves.
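A minimal sketch of the interpolation step. It assumes that scores are interpolated linearly in real bitrate (the text does not say whether a log-bitrate axis is used) and that queries stay within the measured bitrate range. `quality_at_bitrate` is a hypothetical name.

```python
from bisect import bisect_left


def quality_at_bitrate(runs: list[tuple[float, float]], bitrate: float) -> float:
    """Linearly interpolate a codec's quality score at `bitrate`.

    runs: (real_bitrate, score) pairs, one per encoded run of the codec.
    """
    pts = sorted(runs)
    rates = [r for r, _ in pts]
    if not rates[0] <= bitrate <= rates[-1]:
        raise ValueError("bitrate is outside the measured range")
    i = bisect_left(rates, bitrate)  # first run with rate >= bitrate
    if rates[i] == bitrate:
        return pts[i][1]
    (r0, s0), (r1, s1) = pts[i - 1], pts[i]
    return s0 + (s1 - s0) * (bitrate - r0) / (r1 - r0)
```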

We obtained the subjective scores for this study using Subjectify.us. This platform enables researchers and developers to conduct subjective comparisons of image and video processing methods (e.g., compression, inpainting, denoising, matting, etc.) and carry out studies of human quality perception.

To conduct a study, researchers must apply the methods under comparison to a set of test videos (images), upload the results to Subjectify.us and write a task description for study participants. Subjectify.us handles all the laborious steps of a crowdsourced study: it recruits participants, presents uploaded content in a pairwise fashion, filters out responses from participants who cheat or are careless, analyzes collected results, and generates a study report with interactive plots. Thanks to the pairwise presentation, researchers need not invent a quality scale, as study participants simply select the better of the two options.

The platform is optimized for comparing large video files: it prefetches all videos assigned to a study participant and loads them onto the participant's device before asking the first question. Thus, even participants with a slow Internet connection won't experience buffering events that might affect quality perception.

To try the platform in your research project, reach out to www.subjectify.us. This demo video shows an overview of the Subjectify.us workflow.

Codec Analysis and Tuning for Codec Developers and Codec Users

Computer Graphics and Multimedia Laboratory of Moscow State University:

- 20+ years working in the area of video codec analysis and tuning using objective quality metrics and subjective comparisons.

- 30+ reports of video codec comparisons and analysis (H.265, H.264, AV1, VP9, MPEG-4, MPEG-2, decoders' error recovery).

- Development of methods and algorithms for codec comparison and analysis, including analysis of individual codec features and options.

Strong and Weak Points of Your Codec

- In-depth analysis of encoder components (ME, RC on GOP, mode decision, etc.).

- Strong and weak points of your encoder, plus complete information about encoding quality on different content types.

- Encoding quality improvement through pre- and post-filtering (including technology licensing).

Independent Codec Evaluation Compared to Other Codecs for Different Use Cases

- Comparative analysis of your encoder and other encoders.

- We have direct contact with many codec developers.

- You will learn where your encoder stands among the newest well-known encoders (comparing encoding quality, speed, bitrate handling, etc.).

Encoder Features Implementation Optimality Analysis

We analyze the effectiveness of encoder features (speed/quality trade-off), which could lead to up to a 30% improvement in the speed/quality characteristics of your codec. We can help you tune your codec and find the best encoding parameters.

Thanks

Special thanks to the following contributors of our previous comparisons


Contact Information


Project updated by Server Team and MSU Video Group

Project sponsored by YUVsoft Corp.

Project supported by MSU Graphics & Media Lab